Three-Point Direct Stereo Visual Odometry

– Publication Date : September 19-22, 2016

– Category : Visual Odometry

– Place of publication : 27th British Machine Vision Conference (BMVC)

Abstract:

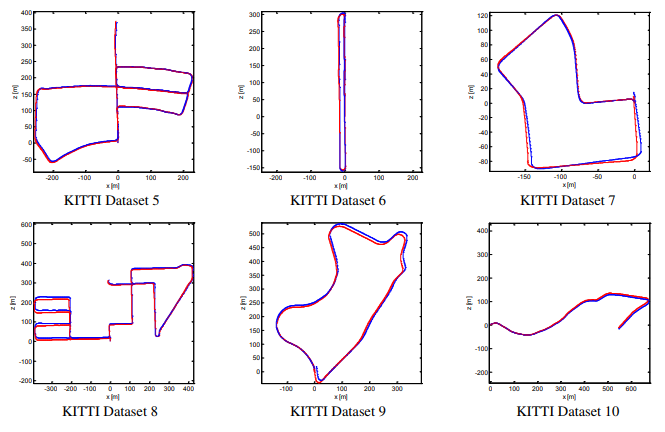

Stereo visual odometry estimates the ego-motion of a stereo camera given an image sequence. Previous methods generally estimate the ego-motion using a set of inlier features while filtering out outlier features. However, since perfect classification of inlier and outlier features is practically impossible, the motion estimate is often contaminated by features wrongly classified as inliers. In this paper, we propose a novel three-point direct method for stereo visual odometry, which is more accurate and more robust to outliers. To improve both accuracy and robustness, we rely on two key ideas: sampling a minimal number of features, i.e., 3 points, and minimizing photometric errors to reduce measurement errors as far as possible. In addition, we utilize temporal information of features, i.e., feature tracks. Local features are updated by the feature tracks, and the updated feature points improve the performance of the proposed pose estimation. We compare the proposed method with other state-of-the-art methods and demonstrate its superiority through experiments on the KITTI benchmark.
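The two ideas named in the abstract, sampling three points and scoring a motion hypothesis by photometric error, can be illustrated with a minimal sketch. This is not the paper's implementation: the function names (`project`, `bilinear`, `photometric_cost`, `sample_three`), the single-pixel residual (rather than an image patch), and the plain pinhole model with intrinsics `K` are all assumptions made for illustration.

```python
import numpy as np

def project(K, X):
    """Pinhole projection of Nx3 camera-frame points to Nx2 pixel coordinates."""
    x = (K @ X.T).T
    return x[:, :2] / x[:, 2:3]

def bilinear(img, uv):
    """Sample a grayscale image at sub-pixel (u, v) locations, clamped to the image."""
    h, w = img.shape
    u = np.clip(uv[:, 0], 0, w - 1.001)
    v = np.clip(uv[:, 1], 0, h - 1.001)
    u0 = np.floor(u).astype(int)
    v0 = np.floor(v).astype(int)
    du, dv = u - u0, v - v0
    return ((1 - du) * (1 - dv) * img[v0, u0]
            + du * (1 - dv) * img[v0, u0 + 1]
            + (1 - du) * dv * img[v0 + 1, u0]
            + du * dv * img[v0 + 1, u0 + 1])

def photometric_cost(img_prev, img_cur, K, pts_prev, R, t):
    """Sum of squared intensity differences for points moved by the pose (R, t).

    pts_prev are 3D points in the previous camera frame (e.g. from stereo
    triangulation); X_cur = R @ X_prev + t is the assumed motion model.
    """
    uv_prev = project(K, pts_prev)
    uv_cur = project(K, pts_prev @ R.T + t)
    r = bilinear(img_cur, uv_cur) - bilinear(img_prev, uv_prev)
    return float(np.sum(r ** 2))

def sample_three(rng, pts):
    """Draw a minimal set of three distinct 3D points (hypothetical sampler)."""
    idx = rng.choice(len(pts), size=3, replace=False)
    return pts[idx]
```

In a complete pipeline one would presumably draw many three-point samples, refine a pose for each by minimizing this cost, and keep the best hypothesis; those steps are omitted here because the abstract does not specify them.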