Learning Icosahedral Spherical Probability Map Based on Bingham Mixture Model for Vanishing Point Estimation

– Published Date : TBD

– Category : Vanishing point estimation

– Place of publication : IEEE/CVF International Conference on Computer Vision (ICCV) 2021

Abstract:

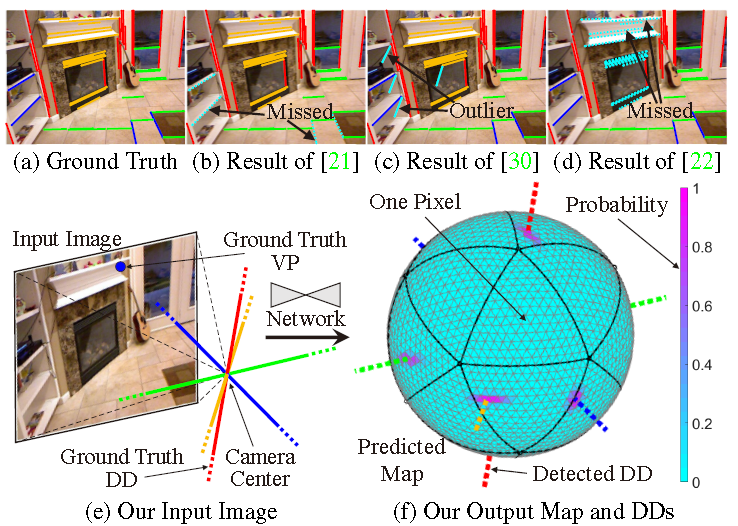

Existing vanishing point (VP) estimation methods rely on pre-extracted image lines and/or prior knowledge of the number of VPs. However, in practice, this information may be insufficient or unavailable. To solve this problem, we propose a network that takes a perspective image as input and predicts a spherical probability map of VPs. Based on this map, we can detect all the VPs. Our method is reliable thanks to four technical novelties. First, we leverage the icosahedral spherical representation to express our probability map. This representation provides a uniform pixel distribution, and thus facilitates estimating arbitrary positions of VPs. Second, we design a loss function that enforces the antipodal symmetry and sparsity of our spherical probability map to prevent over-fitting. Third, we introduce a strategy to generate a reliable ground truth spherical probability map. Our map does not necessarily peak at noisy annotated VPs, and also exhibits various anisotropic dispersions. It thus reasonably expresses the locations and uncertainties of VPs. Fourth, given a predicted probability map, we detect VPs by fitting a Bingham mixture model. This strategy can robustly handle close VPs and also provides a confidence level for each VP, which is useful for practical applications. Experiments showed that our method outperforms state-of-the-art approaches in terms of generality, accuracy, or efficiency.
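
To illustrate why a Bingham component suits this setting, the sketch below evaluates the textbook unnormalized Bingham density on the unit sphere, exp(Σ zᵢ (aᵢ · x)²), and shows the two properties the abstract mentions: antipodal symmetry and anisotropic dispersion. It is a minimal sketch, not the authors' implementation: the function names, the axes, and the concentration values are hypothetical choices for illustration, and the normalizing constant and mixture fitting are omitted.

```python
import math


def normalize(v):
    """Scale a 3-vector to unit length."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)


def bingham_unnormalized(x, axes, concentrations):
    """Unnormalized Bingham density exp(sum_i z_i * (a_i . x)^2).

    axes: three orthonormal 3-vectors a_i (assumed, not checked here).
    concentrations: z_i <= 0, with the largest (0) marking the mode axis.
    """
    exponent = 0.0
    for axis, z in zip(axes, concentrations):
        dot = sum(a * b for a, b in zip(axis, x))
        exponent += z * dot * dot
    return math.exp(exponent)


# Hypothetical component: mode along +/- z, tighter spread along x than along y.
axes = ((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0))
concentrations = (-50.0, -5.0, 0.0)

mode = (0.0, 0.0, 1.0)
antipode = (0.0, 0.0, -1.0)
tilt_x = normalize((0.2, 0.0, 1.0))  # small offset toward the x-axis
tilt_y = normalize((0.0, 0.2, 1.0))  # same offset toward the y-axis

p_mode = bingham_unnormalized(mode, axes, concentrations)
p_antipode = bingham_unnormalized(antipode, axes, concentrations)
p_tilt_x = bingham_unnormalized(tilt_x, axes, concentrations)
p_tilt_y = bingham_unnormalized(tilt_y, axes, concentrations)

print("mode vs antipode:", p_mode, p_antipode)
print("tilt toward x vs y:", p_tilt_x, p_tilt_y)
```

Because the density depends on x only through squared dot products, a direction and its antipode always receive the same value, which matches the fact that a VP direction and its opposite represent the same vanishing point. The unequal concentrations make the density fall off faster along one axis than the other, which is the kind of anisotropic uncertainty the abstract describes.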