Learning Depth from Endoscopic Images

– Conference Date : September 2019

– Category : Monocular Depth Prediction

– Venue : International Conference on 3D Vision (3DV)

Abstract:

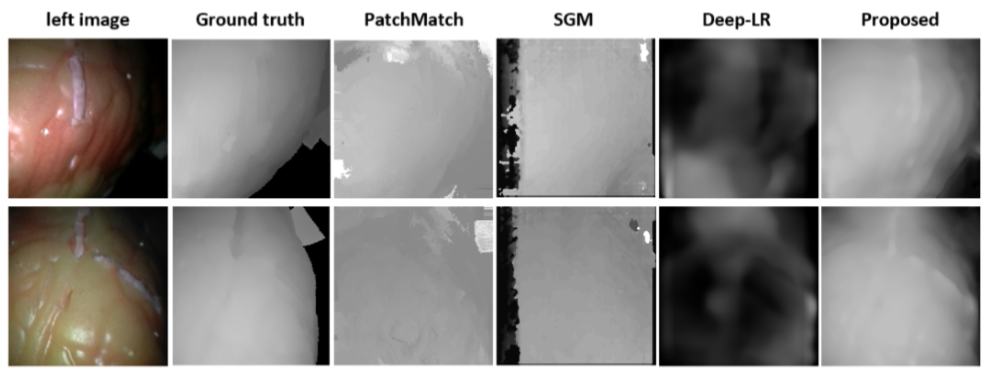

We propose an unsupervised approach to predicting depth maps from images captured by a wireless endoscopic capsule. Recent advances in deep learning have shown that accurate depth maps can be predicted from a single image when the network is trained via unsupervised or self-supervised learning on monocular video sequences or stereo image pairs. However, directly applying these techniques to endoscopic images does not yield satisfactory results, owing to inherent difficulties of wireless capsule imaging such as dim lighting and low image resolution, which differ from normal imaging conditions. We therefore exploit an environmental characteristic of endoscopic images: there is no external light source other than those attached to the capsule. Based on this condition, we propose a depth map prediction scheme based on the direct attenuation model, which guides depth prediction and adds meaningful cues to the loss function. We experimentally verify the proposed method on various endoscopic images.
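
To make the attenuation cue in the abstract concrete, here is a minimal sketch. It assumes the commonly used form of a direct attenuation model with no ambient light, I(x) = J(x) · exp(-β · d(x)), so that a relative depth follows as d(x) = -ln(I(x) / J(x)) / β. The function name `attenuation_depth`, the constant reference radiance `radiance`, and the coefficient `beta` are hypothetical choices for illustration and are not taken from the paper.

```python
import math

def attenuation_depth(intensity, radiance=1.0, beta=1.0, eps=1e-6):
    """Relative depth under the assumed model I = J * exp(-beta * d).

    intensity: 2D list of pixel intensities in [0, radiance].
    radiance:  assumed constant scene radiance J (illustrative).
    beta:      assumed attenuation coefficient (illustrative).
    """
    depth = []
    for row in intensity:
        out_row = []
        for value in row:
            # Clamp the transmission to [eps, 1] so the log stays finite
            # and the resulting depth is non-negative.
            t = min(max(value / radiance, eps), 1.0)
            out_row.append(-math.log(t) / beta)
        depth.append(out_row)
    return depth

# Darker pixels map to larger relative depth under this assumption.
image = [[1.0, 0.5], [0.25, 0.1]]
depth = attenuation_depth(image)
```

Under these assumptions the output is only a relative depth, defined up to the unknown scale 1/β. That makes it more plausible as a guiding cue or an extra loss term, as the abstract describes, than as a metric depth estimate on its own.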