EventSR: From Asynchronous Events to Image Reconstruction, Restoration, and Super-Resolution via End-to-End Adversarial Learning

– Published Date : June 2020

– Category : Event Camera, Super Resolution

– Place of publication : IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)

Abstract:

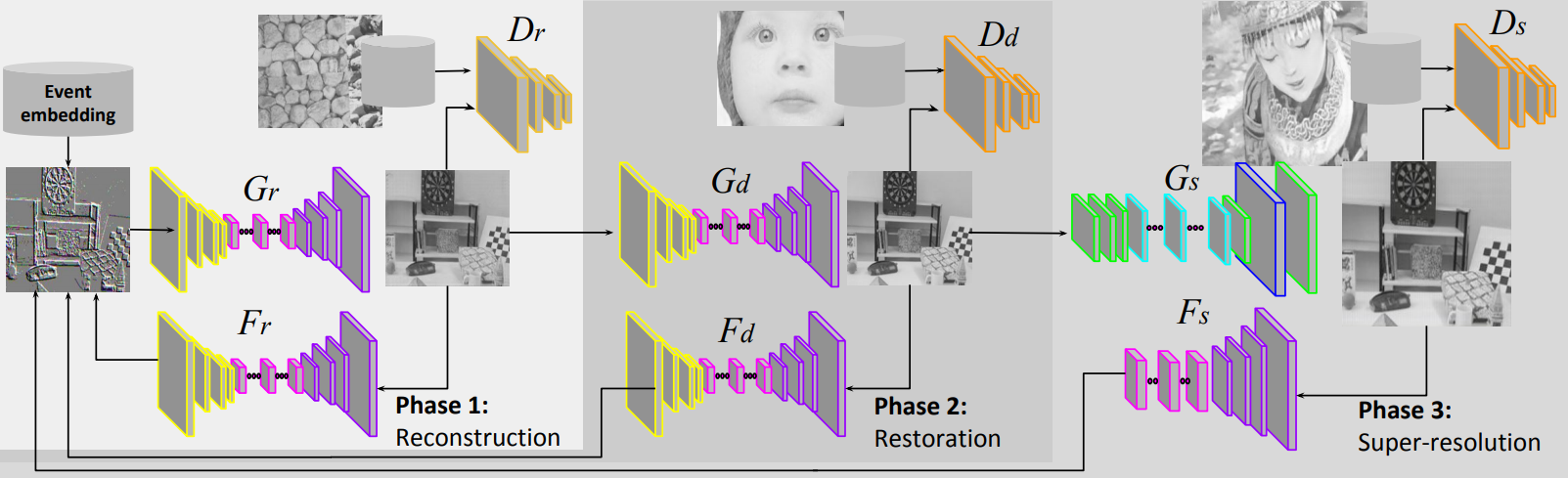

Event cameras sense intensity changes and have many advantages over conventional cameras. To take advantage of event cameras, some methods have been proposed to reconstruct intensity images from event streams. However, the outputs are still in low resolution (LR), noisy, and unrealistic. The low-quality outputs stem broader applications of event cameras, where high spatial resolution (HR) is needed as well as high temporal resolution, dynamic range, and no motion blur. We consider the problem of reconstructing and super-resolving intensity images from LR events, when no ground truth (GT) HR images and downsampling kernels are available. To tackle the challenges, we propose a novel end-to-end pipeline that reconstructs LR images from event streams, enhances the image qualities, and upsamples the enhanced images, called EventSR. For the absence of real GT images, our method is primarily unsupervised, deploying adversarial learning. To train EventSR, we create an open dataset including both real-world and simulated scenes. The use of both datasets boosts up the network performance, and the network architectures and various loss functions in each phase help improve the image qualities. The whole pipeline is trained in three phases. While each phase is mainly for one of the three tasks, the networks in earlier phases are finetuned by respective loss functions in an end-to-end manner. Experimental results show that EventSR reconstructs high-quality SR images from events for both simulated and real-world data. A video of the experiments is available at https://youtu.be/OShS_MwHecs.