Loop-Net: Joint Unsupervised Disparity and Optical Flow Estimation of Stereo Videos with Spatiotemporal Loop Consistency

– Published Date : February 5, 2020

– Category : Stereo Matching, Optical Flow, Scene Flow

– Place of publication : The 32nd Workshop on Image Processing and Image Understanding (IPIU)

Abstract:

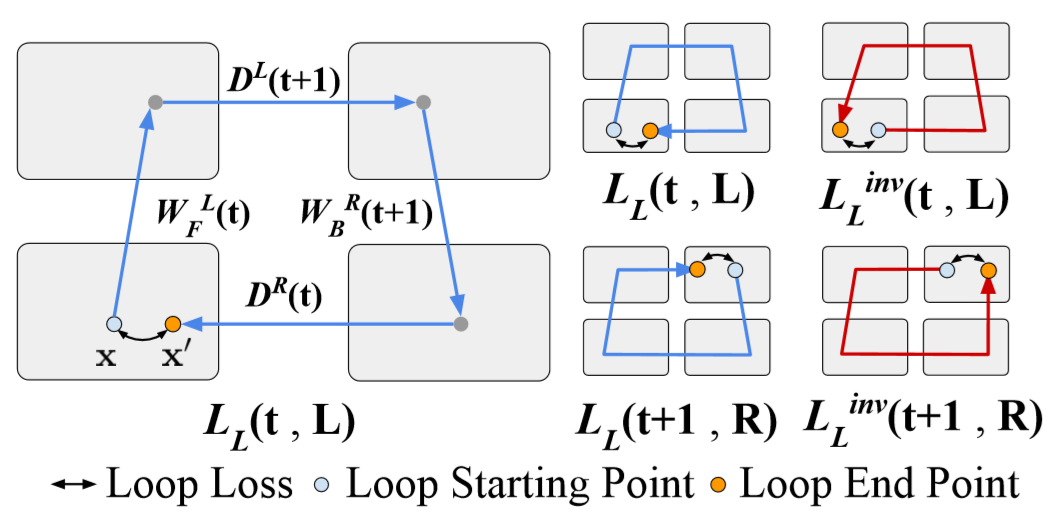

Most existing deep learning-based methods for depth and optical flow estimation require large amounts of ground-truth data for supervision and generalize poorly to video frames, resulting in temporal inconsistency (flickering). In this paper, we propose a joint framework that estimates the disparity and optical flow of stereo videos without supervision and generalizes across video frames by exploiting the spatiotemporal relation between the estimated disparity and flow. To improve both accuracy and consistency, we propose a loop consistency loss that enforces spatiotemporal consistency between the estimated disparity and optical flow. Furthermore, we introduce a video-based training scheme using a convolutional Long Short-Term Memory (c-LSTM) to reinforce temporal consistency. Extensive experiments show that the proposed method not only estimates disparity and optical flow accurately but also improves spatiotemporal consistency. Our framework outperforms current state-of-the-art unsupervised depth and optical flow estimation models on the KITTI benchmark dataset.
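
The abstract does not spell out the loop consistency loss, so the following is only a minimal sketch of one plausible reading: a pixel in the left frame at time t is carried by the forward flow to the left frame at t+1, by disparity to the right frame at t+1, by the backward flow to the right frame at t, and by disparity back to the left frame at t. If the four estimates agree, the pixel returns to where it started, so the mean absolute displacement around the loop can serve as a penalty. The function names, the sign convention for disparity (`x_right = x_left - d`), the nearest-neighbour sampling, and the L1 penalty are all assumptions made for illustration, not details taken from the paper.

```python
import numpy as np

def sample(field, x, y):
    """Nearest-neighbour lookup of `field` at float coordinates, clamped to the image."""
    h, w = field.shape[:2]
    xi = np.clip(np.rint(x).astype(int), 0, w - 1)
    yi = np.clip(np.rint(y).astype(int), 0, h - 1)
    return field[yi, xi]

def loop_residual(flow_l_fwd, disp_l_next, flow_r_bwd, disp_r_cur):
    """Displacement of each pixel after one trip around the assumed loop.

    flow_l_fwd : (H, W, 2) flow, left view,  t   -> t+1  (dx, dy)
    disp_l_next: (H, W)    disparity of the left view at t+1
    flow_r_bwd : (H, W, 2) flow, right view, t+1 -> t    (dx, dy)
    disp_r_cur : (H, W)    disparity of the right view at t
    """
    h, w = disp_l_next.shape
    ys, xs = np.mgrid[0:h, 0:w].astype(float)
    x, y = xs.copy(), ys.copy()

    # Step 1: left(t) -> left(t+1) along the forward flow.
    f = sample(flow_l_fwd, x, y)
    x, y = x + f[..., 0], y + f[..., 1]
    # Step 2: left(t+1) -> right(t+1); assumed convention x_right = x_left - d.
    x = x - sample(disp_l_next, x, y)
    # Step 3: right(t+1) -> right(t) along the backward flow.
    f = sample(flow_r_bwd, x, y)
    x, y = x + f[..., 0], y + f[..., 1]
    # Step 4: right(t) -> left(t); assumed convention x_left = x_right + d.
    x = x + sample(disp_r_cur, x, y)

    # A consistent set of estimates brings every pixel back to its start.
    return np.stack([x - xs, y - ys], axis=-1)

def loop_consistency_loss(flow_l_fwd, disp_l_next, flow_r_bwd, disp_r_cur):
    """Mean absolute loop residual (an assumed L1 penalty)."""
    r = loop_residual(flow_l_fwd, disp_l_next, flow_r_bwd, disp_r_cur)
    return float(np.abs(r).mean())
```

In an actual training setup this would be written with differentiable bilinear warping in a deep learning framework and would typically mask occluded or out-of-frame pixels; the NumPy version here only illustrates the geometry of the loop.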