EOMVS : Event-based Omnidirectional Multi-View Stereo

– Published Date : TBD

– Category : Multi-view Stereo

– Place of publication : IEEE Robotics and Automation Letters (RA-L) 2021

Abstract:

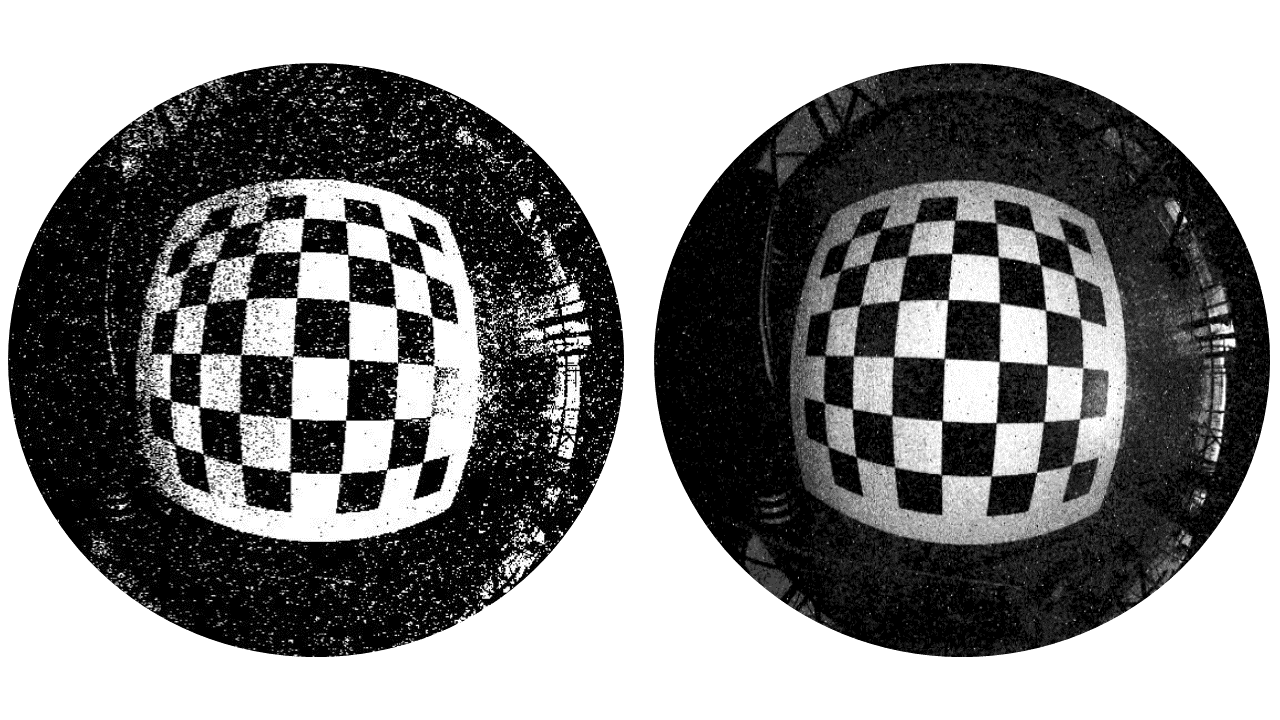

Event-based cameras sense changes in light intensity and asynchronously output event data. Compared with conventional cameras, they offer low latency, high dynamic range, and low power consumption. Thanks to these advantages, event cameras have recently been used for various vision tasks, such as depth estimation and object detection, under severe illumination changes and dynamic motion. Fisheye or omnidirectional cameras, on the other hand, have a much wider field of view (FoV), allowing a more compact system for omnidirectional perception than a setup of multiple normal-FoV cameras.

In this work, we propose a new multi-view stereo method, termed EOMVS, to reconstruct 3D scenes with a wide field of view from the event data captured by omnidirectional event cameras. The proposed EOMVS combines the advantages of both event cameras and fisheye cameras. To validate our EOMVS method, we conduct experiments on both synthetic and real-world datasets and evaluate its performance qualitatively and quantitatively. Experiments show that the presented approach can accurately recover 3D information over a wide field of view.
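
To make the sensing principle in the abstract concrete, the following minimal sketch simulates how an event camera might turn per-pixel brightness changes into events. It is not part of EOMVS and is not taken from the paper: the function name `generate_events`, the `threshold` value, and the frame-based simplification (at most one event per pixel per frame, with the reference reset to the current value) are all assumptions made for illustration. A real sensor operates asynchronously per pixel rather than on frames.

```python
import numpy as np


def generate_events(log_frames, timestamps, threshold=0.2):
    """Simulate events from a sequence of log-intensity frames.

    Hypothetical helper for illustration only. An event (t, x, y, polarity)
    is emitted when the log intensity at a pixel differs from that pixel's
    reference value by at least `threshold`. The reference is then reset
    to the current value at that pixel.
    """
    # Per-pixel reference: the log intensity at the time of the last event.
    reference = np.asarray(log_frames[0], dtype=np.float64).copy()
    events = []
    for frame, t in zip(log_frames[1:], timestamps[1:]):
        frame = np.asarray(frame, dtype=np.float64)
        diff = frame - reference
        # Pixels whose change since the last event reaches the threshold.
        ys, xs = np.nonzero(np.abs(diff) >= threshold)
        for y, x in zip(ys, xs):
            polarity = 1 if diff[y, x] > 0 else -1
            events.append((t, int(x), int(y), polarity))
            reference[y, x] = frame[y, x]
    return events


if __name__ == "__main__":
    frames = [
        np.zeros((2, 2)),
        np.array([[0.5, 0.0], [0.0, 0.0]]),   # pixel (x=0, y=0) brightens
        np.array([[0.5, 0.0], [0.0, -0.3]]),  # pixel (x=1, y=1) darkens
    ]
    for event in generate_events(frames, [0.0, 0.01, 0.02]):
        print(event)
```

Only pixels that change produce output, which is why event data is sparse and can be delivered with low latency. Static regions of the scene generate no events at all.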