E2SRI: Learning to Super-Resolve Intensity Images from Events

– Published Date : July 2021

– Category : Super Resolution, Event Camera

– Place of publication : IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI), 2021

Abstract:

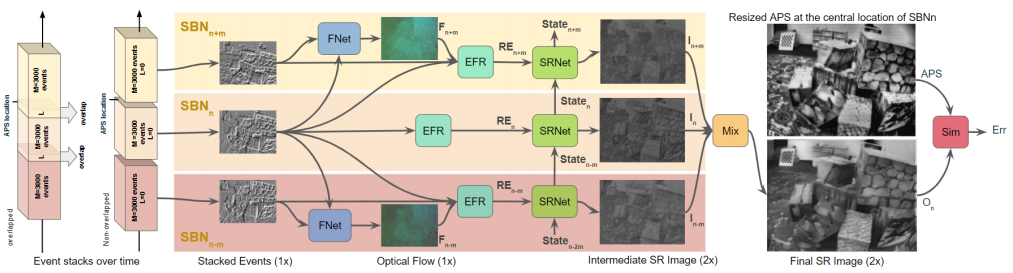

An event camera reports per-pixel intensity differences as an asynchronous stream of events with low latency, high dynamic range (HDR), and low power consumption. This stream of sparse or dense events prevents well-known computer vision algorithms from being applied directly to event camera output. Vision tasks that are sensitive to image quality factors such as spatial resolution and blur (object detection, for example) would also benefit from higher-resolution image reconstruction from events. Moreover, despite recent advances in the spatial resolution of event camera hardware, most commercially available event cameras still have relatively low spatial resolution compared to conventional cameras. We propose an end-to-end recurrent network that reconstructs high-resolution, HDR, and temporally consistent grayscale or color frames directly from the event stream, and extend it to generate temporally consistent videos. We evaluate our algorithm on real-world and simulated sequences and verify that it reconstructs fine details of the scene, outperforming previous methods in quantitative quality measures. We further investigate how to (1) combine active pixel sensor frames (produced by an event camera) with events in a complementary setting and (2) reconstruct images iteratively to reach even higher quality and resolution.
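
To make the input described in the abstract concrete, here is a minimal sketch of what an event stream looks like and how it can be summed into a frame-like grid. It is not the E2SRI network and not the paper's event representation. The `(timestamp, x, y, polarity)` tuple layout and the function name `accumulate_events` are assumptions chosen for illustration only.

```python
from typing import List, Sequence, Tuple

# Hypothetical event layout (an assumption, not taken from the paper):
# (timestamp in seconds, x, y, polarity), with polarity +1 for a brightness
# increase and -1 for a decrease at that pixel.
Event = Tuple[float, int, int, int]


def accumulate_events(events: Sequence[Event], height: int, width: int) -> List[List[int]]:
    """Sum event polarities per pixel into a height x width grid."""
    frame = [[0] * width for _ in range(height)]
    for _timestamp, x, y, polarity in events:
        # Each event only says "this pixel got brighter or darker";
        # summing polarities gives a crude per-pixel change map.
        frame[y][x] += polarity
    return frame


events: List[Event] = [
    (0.001, 1, 0, +1),
    (0.002, 1, 0, +1),
    (0.003, 2, 1, -1),
    (0.004, 0, 2, +1),
]
frame = accumulate_events(events, height=3, width=4)
```

The resulting grid records only relative brightness changes, not absolute intensity, and most pixels stay at zero when few events fire. That gap between a change map and a full image is what a learned reconstruction method has to close.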